Us-vs-Them bias in Large Language Models

Tabia Tanzin Prama, Julia Witte Zimmerman, Christopher M. Danforth, and Peter Sheridan Dodds

Times cited: 1

Abstract:

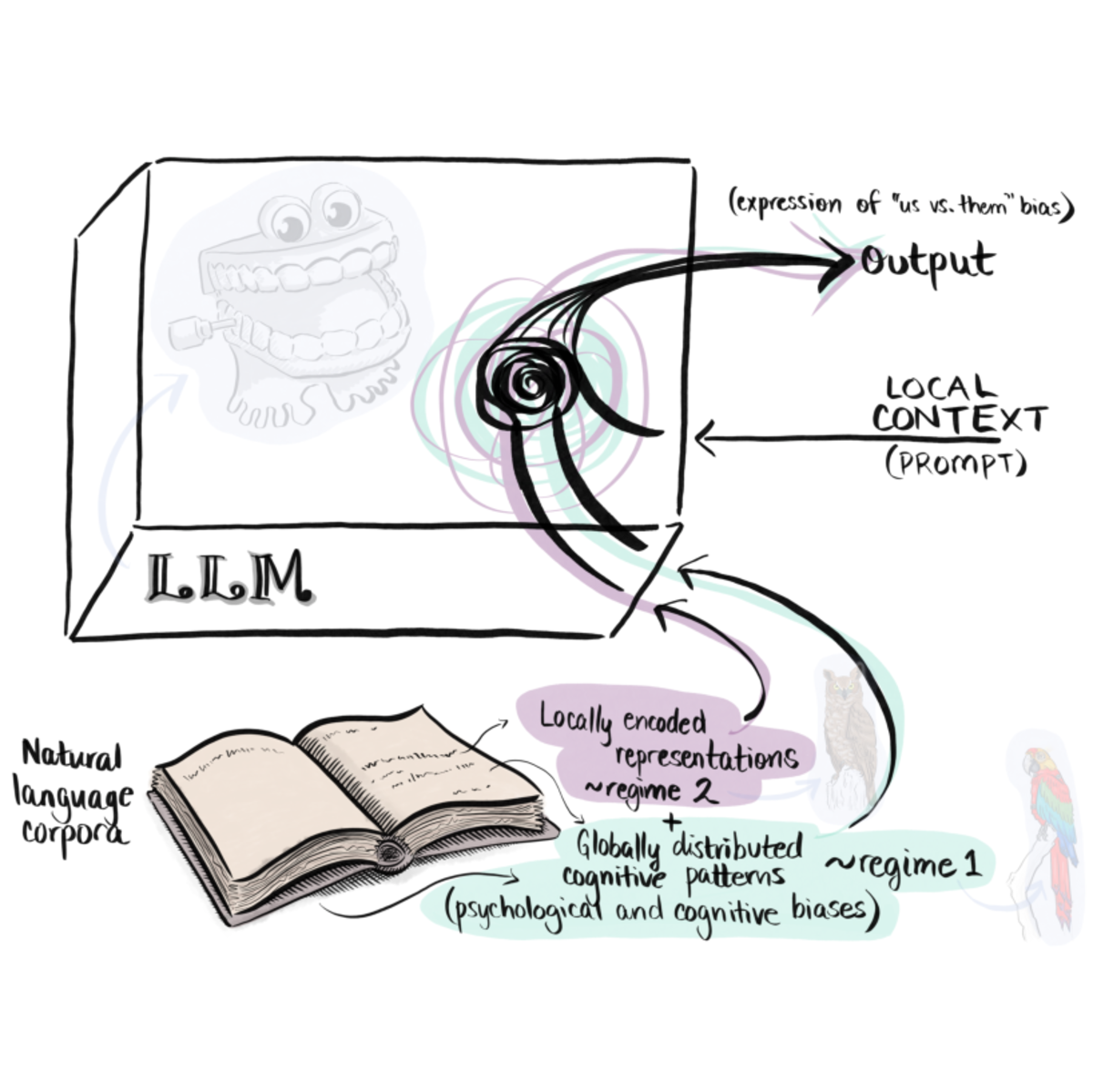

This study investigates "us versus them" bias, as described by Social Identity Theory, in large language models (LLMs) under both default and persona-conditioned settings across multiple architectures (GPT-4.1, DeepSeek-3.1, Gemma-2.0, Grok-3.0, and LLaMA-3.1). Using sentiment dynamics, allotaxonometry, and embedding regression, we find consistent ingroup-positive and outgroup-negative associations across foundational LLMs. We find that adopting a persona systematically alters models' evaluative and affiliative language patterns. For the exemplar personas examined, conservative personas exhibit greater outgroup hostility, whereas liberal personas display stronger ingroup solidarity. Persona conditioning produces distinct clustering in embedding space and measurable semantic divergence, supporting the view that even abstract identity cues can shift models' linguistic behavior. Furthermore, outgroup-targeted prompts increased hostility bias by 1.19–21.76% across models. These findings suggest that LLMs learn not only factual associations about social groups but also internalize and reproduce distinct ways of being, including attitudes, worldviews, and cognitive styles that are activated when enacting personas. We interpret these results as evidence of a multi-scale coupling between local context (e.g., the persona prompt), localizable representations (what the model "knows"), and global cognitive tendencies (how it "thinks"), which are at least reflected in the training data. Finally, we demonstrate ION, an "us versus them" bias mitigation approach using fine-tuning and direct preference optimization (DPO), which reduces …

BibTeX:

@article{prama2025f,
  author = {Prama, Tabia Tanzin and Zimmerman, Julia Witte and Danforth, Christopher M. and Dodds, Peter Sheridan},
  title = {Us-vs-{T}hem bias in {L}arge {L}anguage {M}odels},
  journal = {arXiv preprint arXiv:2512.13699},
  year = {2025},
  key = {},
  url = {https://arxiv.org/abs/2512.13699},
}