LLMs for Low-Resource Dialect Translation Using Context-Aware Prompting: A Case Study on Sylheti

Tabia Tanzin Prama

Proceedings of the Second Workshop on Bangla Language Processing (BLP-2025), 2025

Times cited: 1

Abstract:

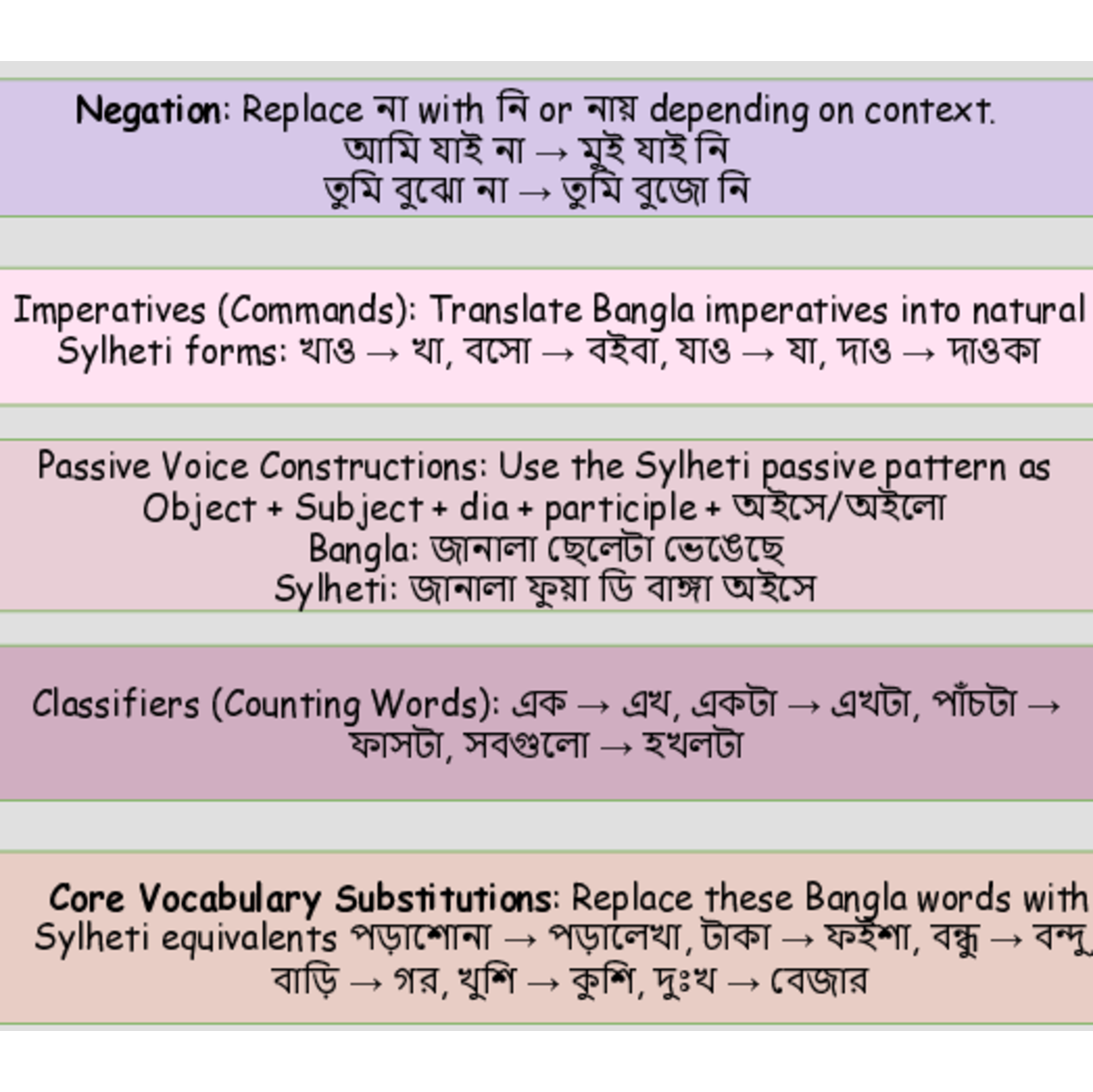

Large Language Models (LLMs) have demonstrated strong translation abilities through prompting, even without task-specific training. However, their effectiveness in dialectal and low-resource contexts remains underexplored. This study presents the first systematic investigation of LLM-based Machine Translation (MT) for Sylheti, a dialect of Bangla that is itself low-resource. We evaluate five advanced LLMs (GPT-4.1, GPT-4.1-mini, LLaMA 4, Grok 3, and Deepseek V3. 2) across both translation directions (Bangla↔ Sylheti), and find that these models struggle with dialect-specific vocabulary. To address this, we introduce Sylheti-CAP (Context-Aware Prompting), a three-step framework that embeds a linguistic rulebook, dictionary (core vocabulary and idioms), and authenticity check directly into prompts. Extensive experiments show that Sylheti-CAP consistently improves translation quality across models and prompting strategies. Both automatic metrics and human evaluations confirm its effectiveness, while qualitative analysis reveals notable reductions in hallucinations, ambiguities, and awkward phrasing—establishing Sylheti-CAP as a scalable solution for dialectal and low-resource MT.

- This is the default HTML.

- You can replace it with your own.

- Include your own code without the HTML, Head, or Body tags.

BibTeX:

@inproceedings{prama2025c,

author = {Prama, Tabia Tanzin},

title = {{LLM}s for low-resource dialect translation using

context-aware prompting: {A} case study on {S}ylheti},

booktitle = {Proceedings of the Second Workshop on Bangla

Language Processing (BLP-2025)},

year = {2025},

key = {},

pages = {292–308},

url = {https://aclanthology.org/2025.banglalp-1.24/},

}